For beginners, SEO is usually associated with keyword optimization. You do the keyword research for your website, optimize your content around those keywords, then your pages get ranked in the search results for the keywords. Unfortunately, that's just the tip of the SEO iceberg.

Thanks to advancements in machine learning and data science, search engine algorithms are becoming more “intelligent” and “demanding”. Google has officially made page experience an important ranking factor with the announcement of the new Core Web Vitals. Therefore, ranking signals other than content and keywords deserve more attention.

That said, there are technical aspects that you may want an SEO expert to take care of. However, before beginning your search for a consultant, it's better to become an educated consumer and familiarize yourself with how search engines work. Keep reading to get acquainted with SEO factors besides keywords, so you can choose to carry out a simple SEO audit yourself or hire a professional.

Optimize crawlability

SEO doesn't begin with keyword research, as many people assume. In fact, search engines can’t rank a site if they can’t find it. In most cases, the search bots crawl your site automatically behind the scenes. Still, problems can arise midway through the process and prevent the bots from crawling your site.

P.S. You can check the Crawl Stats report in Search Console for issues with crawling.

Issues with your site

To make your website accessible via the World Wide Web, you must use a web hosting service, which serves your site from its servers. As a result, when those servers experience downtime, Internet users can't visit your website and search engines can't communicate with it. Server issues can stem from technical problems on the hosting provider's side, or they can happen because you exceed the server's bandwidth limit (for example, so many visitors enter your website that the server can't handle them).

In these cases, issues are usually temporary and there is not much you can do. Switching to another hosting provider is an option. However, keep in mind that outages occur even with top-tier hosting providers like Amazon Web Services. Also, the search engine bots will come back to your website later and crawl your site anyway.

Another site-related issue, and one you can actually fix, is flaws in the code. Googlebot reads the robots.txt file first when it visits your site to identify which pages it may crawl and which it may not. If it can’t reach the robots.txt file, crawling is postponed until the file becomes reachable. Hence, make sure you (or your website developer) serve a valid robots.txt file at the root of your site.
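To get a feel for how robots.txt rules decide what a bot may fetch, here is a minimal Python sketch using the standard library's `urllib.robotparser`. The robots.txt content, the `mysite.com` URLs, and the `is_crawlable` helper are assumptions made up for illustration, and Python's parser may not match Googlebot's behavior in every edge case.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt rules (an assumption, not taken from a real store).
ROBOTS_TXT = """\
User-agent: *
Disallow: /cart
Disallow: /checkout
Allow: /
"""

def is_crawlable(robots_txt: str, url: str, user_agent: str = "Googlebot") -> bool:
    """Return True if the given robots.txt rules let user_agent fetch url."""
    parser = RobotFileParser()
    # parse() takes the file's lines, so no network request is needed here.
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)

product_ok = is_crawlable(ROBOTS_TXT, "https://mysite.com/products/xyz")
cart_ok = is_crawlable(ROBOTS_TXT, "https://mysite.com/cart")
print(product_ok, cart_ok)
```

With these sample rules, the product page is crawlable while the cart page is not. Running a check like this against your own rules is a quick way to spot a page you blocked by accident.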

Issues with crawl budget

In the crawling stage, you need to pay attention to the crawl budget. Crawl budget is the number of pages Googlebot crawls and indexes on a website within a given timeframe. If your number of pages exceeds your site’s crawl budget, you’re going to have unindexed pages on your site.

Duplicate content, infinite spaces, and error pages are the usual reasons a site exceeds its crawl budget.

Duplicate content

This happens when numerous URLs point to the same article or product. For example, say I have a Shopify store and I put product XYZ in both collection A and collection B. Based on the collection path, there will be mysite.com/collections/a/products/xyz and mysite.com/collections/b/products/xyz, and both of them take visitors to product XYZ. The original product URL created when I uploaded the product to my Shopify store, mysite.com/products/xyz, leads there as well. The more collections a product is in, the more URLs it generates.

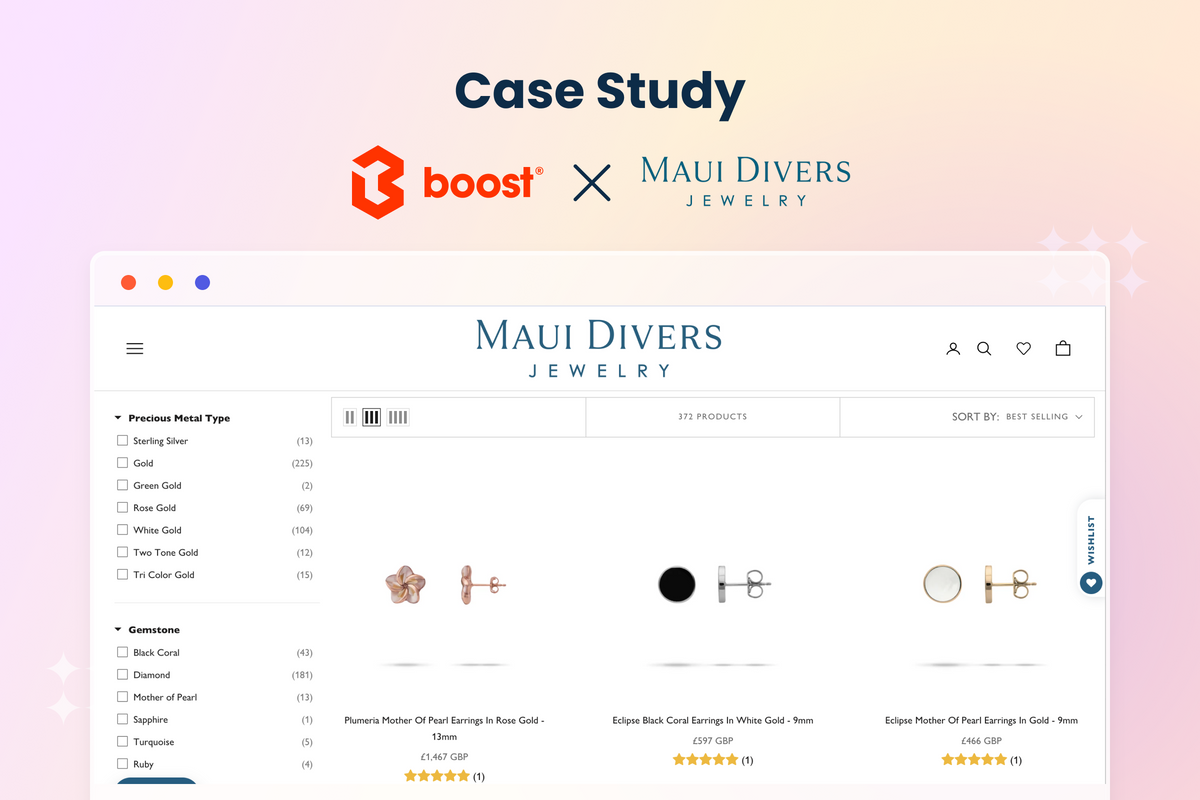

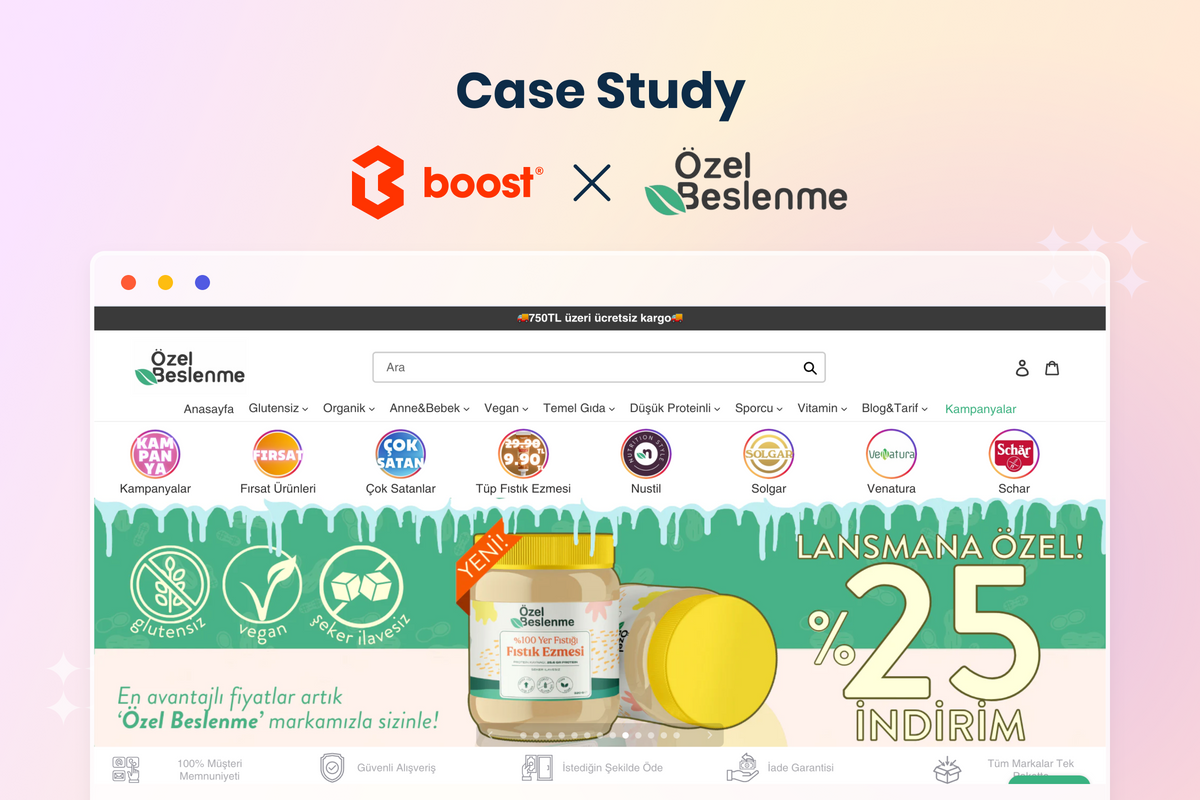

You can solve this problem by using canonical URLs. Adding a link element with the rel="canonical" attribute is the recommended way of consolidating duplicate content for search engines. Some Shopify apps like Boost Product Filter & Search allow users to use canonical URLs for product pages. The app automatically replaces URLs containing collection paths with the original product URLs, so you don't need to touch the code.
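As a rough illustration of what canonicalization does, the Python sketch below maps the collection-scoped URLs from the example above to the original product URL and builds the matching tag. The function names and the regular expression are assumptions for this example, not how Shopify or any app implements it. The sketch also drops query strings and fragments, which you may not want if variants need their own canonical URLs.

```python
import re
from urllib.parse import urlsplit, urlunsplit

# Matches paths such as /collections/a/products/xyz (pattern assumed from the example).
_COLLECTION_PRODUCT = re.compile(r"^/collections/[^/]+/products/([^/]+)/?$")

def canonical_product_url(url: str) -> str:
    """Map a collection-scoped product URL to the original product URL."""
    parts = urlsplit(url)
    match = _COLLECTION_PRODUCT.match(parts.path)
    path = f"/products/{match.group(1)}" if match else parts.path
    # Query string and fragment are dropped on purpose in this sketch.
    return urlunsplit((parts.scheme, parts.netloc, path, "", ""))

def canonical_link_tag(url: str) -> str:
    """Build the tag that goes into the page's <head>."""
    return f'<link rel="canonical" href="{canonical_product_url(url)}">'

tag_a = canonical_link_tag("https://mysite.com/collections/a/products/xyz")
tag_b = canonical_link_tag("https://mysite.com/collections/b/products/xyz")
print(tag_a)
```

Both collection URLs end up with the same tag pointing at https://mysite.com/products/xyz, which is the signal that tells search engines to treat them as one page.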

Furthermore, it’s always good to remove unnecessary URL parameters and keep URLs as clean as possible. New parameters can be added to the original product page URL to signal the variants. In such cases, use standard "key=value" pairs joined by "&".

When you choose product variants on Uniqlo’s online store, the site creates new product URLs with “search-bot-friendly” parameters. Because the variants here are color and size, the parameters look like this: "colorCode=value&sizeCode=value". (Source: Uniqlo)

You can also choose not to let search engine bots crawl and index the variants' URLs by submitting a sitemap with the canonical version of each URL, adding rel="nofollow" to internal links, or using a robots.txt disallow rule. These things are somewhat technical, so we won't explain how to do them in detail in this blog post. You can head here to learn more.

Using 301 redirects is another effective approach to duplicate content. The 301 indicates that the redirects are permanent and they are “a strong signal that the redirect target should be canonical”.

Infinite spaces

Some URLs provide little or no value to searchers and search engines. A new URL that appears every time visitors click a filter option or sorting option wastes a lot of your crawl budget.

You can use JavaScript to dynamically sort/filter/hide content without updating the URL. This one is a bit technical. If you are using a third-party product filter app, check with them to see if they offer it or whether they can help users customize it.

Different URLs for filter options are unnecessary so Uniqlo chooses not to update the URL when customers use the product filter. Here when you refine the T-shirts collection by size and by color, the URL remains as the collection URL. (Source: Uniqlo)

The techniques mentioned earlier, adding rel="nofollow" to internal links and using a robots.txt disallow rule, can also do the job, but you need to consider the difference between duplicate content and infinite spaces. With the former, all the URLs are accessible, but you tell Googlebot to pick one of them as canonical or preferred. With infinite spaces, however, you may not want the URLs to exist at all because there are too many combinations.

Simply changing the order of the filter options you click produces a new URL. This will take up a lot of the crawl budget. (Source: Sephora)
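If filter URLs have to exist, one way to limit the number of combinations is to emit the parameters in a fixed order, so that clicking the same filters in a different sequence yields the same URL. The Python sketch below shows the idea with the standard library. The `normalize_filter_url` helper, the `mysite.com` URLs, and the parameter values are hypothetical; the parameter names are borrowed from the Uniqlo example earlier.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

def normalize_filter_url(url: str) -> str:
    """Sort query parameters so click order no longer changes the URL."""
    parts = urlsplit(url)
    # parse_qsl returns (key, value) pairs; sorting them fixes the order.
    params = sorted(parse_qsl(parts.query, keep_blank_values=True))
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(params), ""))

size_first = normalize_filter_url(
    "https://mysite.com/collections/t-shirts?sizeCode=M&colorCode=09"
)
color_first = normalize_filter_url(
    "https://mysite.com/collections/t-shirts?colorCode=09&sizeCode=M"
)
print(size_first == color_first)
```

Both inputs normalize to the same URL, so a crawler sees one page instead of one page per click order.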

Soft error & hacked pages

When Googlebot crawls error pages and hacked pages, it spends your crawl budget for no good reason. You can identify broken pages by using Ahrefs or Search Console.

After getting the list of error pages, it's time to understand the problems and prepare solutions.

For the soft 404s, determine whether the URLs are:

- Old URLs that have been changed. In this case, you can use a redirect to point the outdated URLs to more accurate ones.

- Pages that don't exist and return a 404 or 410 response. For these errors, you can customize your 404 page to help users find what they were looking for when they landed on the error page.
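The decision process above can be sketched as a small triage function in Python. It assumes you have already exported a list of crawled URLs with their status codes and page text from a tool of your choice. The `CrawledPage` fields, the "not found" phrases, and the sample URLs are all assumptions for illustration. The sketch treats a page that returns 200 but only shows a "not found" message as a soft 404, which is the common understanding of the term.

```python
from dataclasses import dataclass
from typing import Optional

# Assumed phrases that suggest an "empty" page; tune these for your own site.
NOT_FOUND_PHRASES = ("page not found", "no longer available")

@dataclass
class CrawledPage:
    url: str
    status: int                     # HTTP status code the server returned
    body: str = ""                  # visible page text
    new_url: Optional[str] = None   # replacement URL, if the page has moved

def triage(page: CrawledPage) -> str:
    """Suggest a next step for one crawled URL."""
    if page.new_url:
        return f"redirect to {page.new_url}"
    if page.status in (404, 410):
        return "customize the 404 page"
    text = page.body.lower()
    if page.status == 200 and any(phrase in text for phrase in NOT_FOUND_PHRASES):
        return "soft 404: return a real 404 or 410"
    return "no action"

pages = [
    CrawledPage("/old-sale", 200, "Page not found", new_url="/sale"),
    CrawledPage("/discontinued", 200, "Sorry, this item is no longer available."),
    CrawledPage("/typo", 404),
    CrawledPage("/products/xyz", 200, "Product XYZ"),
]
actions = [triage(p) for p in pages]
print(actions)
```

A phrase match is only a heuristic, so treat the output as a to-do list to review by hand rather than a final verdict.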

Hacking is a more serious problem that needs to be fixed ASAP. There are several ways to know if your site is hacked:

- You’ve been alerted by Google

- You see a warning when searching for your website in a search engine stating that the site is hacked or compromised

- There are suspicious activities on your web pages such as unusual pop-ups, layout changes, content disappearing, etc.

If you get these signals, go to the Security Issues section of Search Console and look for example URLs where Google detected that your site has been hacked. If you suspect that a single page, rather than the whole site, is affected by malware, use URL Inspection in Search Console.

In many cases, hackers use sophisticated tricks to hide the hacked content so that Search Console can't identify it. For that reason, Google provides the Hacked Site Troubleshooter to locate all the hacked content on a site.

Enhance site security

Cybersecurity is of the utmost importance for online transactions. That's why Google puts it as the top priority of their search engine. The use of HTTPS encryption has been confirmed to be a ranking signal among hundreds of unofficial ranking factors.

Sites that use HTTPS encryption have Secure Sockets Layer (SSL) certificates. A certificate creates a secure connection between a website and its users, adding an extra layer of security that protects the information exchanged between them.

If your website URL begins with HTTP, not HTTPS, your site is less secure, so Google and other search engines may rank you lower in the SERPs. An SSL certificate can be issued by a certificate authority, for example, IdenTrust or GoDaddy.

For Shopify merchants, the store's checkout and any content that's hosted on a .myshopify.com domain have SSL certificates activated by default.

Reinforce domain authority

There can be millions and even billions of results for a search term, so how do Google and other search engines know which URL to put in the first place? Beyond keyword matching, it's the most reliable sources available out there that get prioritized in the SERPs. Expertise, authoritativeness, and trustworthiness on a given topic are what the search bots look for to decide the ranking of the pages.

An overview of the domain authority report on Moz. Higher scores correspond to a greater likelihood of ranking. (Source: HubSpot)

Moz, Ahrefs, and Semrush are among the myriad tools that can tell you your domain authority score. It's an excellent indicator for assessing your website's performance against that of your competitors. Admittedly, domain authority is not a direct ranking factor. However, Google has suggested that this long-term strategy can help you “differentiate yourself from other people… and really show the additional value that you provide, and make sure Google and other search engines recognize that additional value”.

Having a high-quality backlink profile is a well-known tactic to increase domain credibility. Building up the number and quality of links pointing to your site is considered an off-page SEO factor, and it can have a significant impact on your search ranking. To get started with backlink building, you can write guest blog posts or offer backlink exchanges. Contacting KOLs or influencers in your industry to endorse your site URLs in their posts is also a good practice, but it's more difficult since experts usually only agree to feature big brands.

Another thing to consider carefully when executing a backlink strategy is that backlinks are only useful when they come from reputable websites in your sector. The SEO tools that help you check your domain authority can also run the check for other websites. So, pick one and make the most of it for your backlink building.

A simpler way to make your business more credible is to create consistent business listings. A Google My Business page is definitely a must-do on the checklist. You can also search for industry-related directory sites like BOTW (Best of the Web) or AboutUs, and set up business profiles on them. Make sure the business name, address, and phone number are used consistently across all business profiles.
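Checking name, address, and phone consistency by eye gets tedious once you have more than a few profiles. The Python sketch below compares listings after ignoring differences in letter case, spacing, and phone punctuation. The business details and the `normalize_nap` and `mismatched_listings` helpers are invented for illustration, and the first listing is assumed to be the reference.

```python
import re
from typing import Dict, List, Tuple

Listing = Tuple[str, str, str]  # (name, address, phone)

def normalize_nap(name: str, address: str, phone: str) -> Listing:
    """Lowercase, collapse whitespace, and keep only the digits of the phone."""
    def clean(text: str) -> str:
        return re.sub(r"\s+", " ", text.strip().lower())
    return clean(name), clean(address), re.sub(r"\D", "", phone)

def mismatched_listings(listings: Dict[str, Listing]) -> List[str]:
    """Return the directories whose details differ from the first listing."""
    sources = list(listings)
    reference = normalize_nap(*listings[sources[0]])
    return [s for s in sources[1:] if normalize_nap(*listings[s]) != reference]

# Hypothetical business profiles.
listings = {
    "Google My Business": ("Acme Store", "12 Main Street, Springfield", "+1 (555) 010-0000"),
    "BOTW": ("ACME Store", "12 Main Street,  Springfield", "+1 555 010 0000"),
    "AboutUs": ("Acme Store", "12 Main St, Springfield", "+1 555 010 0000"),
}
mismatches = mismatched_listings(listings)
print(mismatches)
```

Here only the AboutUs profile is flagged, because it abbreviates "Street" to "St". The check is deliberately strict: it flags anything that is not the same after basic cleanup, and you decide whether the difference matters.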

Don’t forget to take advantage of user-generated content (UGC) on both social channels and your main site. UGC naturally carries basic attributes such as keywords, titles, backlinks, and internal links that can assist with your SEO. With reviews and feedback, it's the customers that do all the work for you. That’s why the majority of websites feature a testimonial section on their homepage and leading e-commerce stores always show product reviews from buyers.

Put page experience in the first place

With the new Core Web Vitals update, we got the official announcement from Google: “We'll begin using page experience as part of our ranking systems beginning in mid-June 2021”. This reaffirms that user experience is as essential as content and keywords for ranking in search engines.

There are two different groups of factors that go into the so-called page experience.

- Boolean Checks: Mobile-friendliness, Safe browsing, No intrusive interstitials

- Core Web Vitals: First Input Delay, Largest Contentful Paint, Cumulative Layout Shift

In the scope of this article, we will analyze the first group. Click here for the new Core Web Vitals guidelines.

Mobile-friendliness

People, without a doubt, prefer to surf the Internet via smartphones and tablets. M-commerce is a huge yet highly competitive opportunity for online businesses, with nearly two-thirds of time spent in e-retail coming from mobile devices. Search engines know this trend, so they tend to give mobile-friendly sites a better position in the SERPs.

Mobile-friendliness refers to the adjustability of the content according to the screen size. Besides testing your site on a variety of devices, you should use Search Console to get a list of URLs that are not mobile optimized.

(Source: Search Console)

PageSpeed Insights is another free tool that not only identifies problems with the website's mobile version but also suggests opportunities to improve the page experience.


Safe browsing

Being classified as safe for browsing involves more than just using a domain starting with HTTPS. It's about your effort to help visitors of your site avoid phishing attacks and other cyber crimes.

The first and foremost thing you should do is follow the regulations for e-commerce security, for example, the Payment Card Industry Data Security Standard (PCI DSS) for any website that accepts online card payments, and GDPR for online stores operating in the European Union and European Economic Area.


In addition to making your own site adhere to the regulations, you need to make sure third-party apps or plugins stick to these rules too. Content management systems like WordPress and Shopify usually have high standards and expectations for public apps on their platforms. However, misdeeds can happen behind the scenes, so do look out for data-collecting activities from any of your extensions.

If your site is marked as insecure, Google displays a notice that reads "This site may harm your computer" to visitors, which will definitely drive them away. Again, check the Security Issues in Search Console to fix the problem that causes your website to be infected. If your website gets flagged by mistake or after it is clean and secure, you should request a review on the same page to quickly remove the warning notification.

Intrusive interstitials

Interstitials are pop-ups, overlays, and anything else that blocks most or all of a page. A blocked view leads to a bad user experience for both desktop and mobile users, so Google penalizes pages for it.

Still, not all interstitials are bad. It’s common to use a welcome pop-up with discounts and subscription info for first-time visitors when they enter an online store. According to Search Engine Journal, Google just looks for and gives bad marks for “interstitials that show up on the interaction between the search click and going through the page and seeing the content”.

Hence, we can understand that intrusive interstitials are those that cover most or all of the content on a page, are not responsive, and are not triggered by a user action. Also, it’s important to note that Google has not disclosed the details of the intrusive interstitial penalty and considers it “a softer negative ranking factor”.

To avoid intrusive interstitials, you should:

- Use small messages such as banners, inline messages, and slide-ins for mobile versions instead.

- Identify required interstitials, such as age-verification pop-ups and cookie notifications. These don't get penalized, so you can use them freely.

- Check all other pop-ups from apps and plugins (e.g., coupon offers, membership programs), and evaluate them according to the amount of space they take up. You may want to resize them if they block the user's viewport.
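The checklist above can be turned into a rough audit script. The Python sketch below estimates how much of the screen each pop-up covers and flags the ones that look intrusive. Google does not publish an exact size threshold, so the 50% cut-off, the `Popup` fields, and the sample sizes are all assumptions chosen for illustration.

```python
from dataclasses import dataclass

@dataclass
class Popup:
    name: str
    width: int                    # rendered size in CSS pixels
    height: int
    required: bool = False        # e.g. age verification or cookie notice
    user_triggered: bool = False  # opened by a click rather than on page load

def coverage(popup: Popup, viewport_w: int, viewport_h: int) -> float:
    """Fraction of the viewport the pop-up covers (clamped to the viewport)."""
    area = min(popup.width, viewport_w) * min(popup.height, viewport_h)
    return area / (viewport_w * viewport_h)

def looks_intrusive(popup: Popup, viewport_w: int, viewport_h: int,
                    max_coverage: float = 0.5) -> bool:
    """Flag pop-ups that cover most of the screen without a user action."""
    if popup.required or popup.user_triggered:
        return False
    return coverage(popup, viewport_w, viewport_h) > max_coverage

# Hypothetical pop-ups measured on a 390 x 844 phone screen.
popups = [
    Popup("coupon overlay", 360, 700),
    Popup("newsletter banner", 390, 80),
    Popup("age verification", 390, 844, required=True),
]
flagged = [p.name for p in popups if looks_intrusive(p, 390, 844)]
print(flagged)
```

In this sample only the coupon overlay is flagged, since it covers roughly three quarters of the screen and appears without a user action. Resizing it to a banner or slide-in would bring it under the assumed threshold.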

Before you leave

With the onset of the pandemic, a growing number of brick-and-mortar stores have accelerated their digital transformation as a sure-fire way to thrive. The top priority in this process is always search engine optimization (SEO).

However, the traditional approach and black-hat SEO tactics such as keyword stuffing will no longer work. It’s high time for you to optimize crawlability, site security, domain authority, and page experience to achieve a better ranking in SERPs.